- SDK

- UI

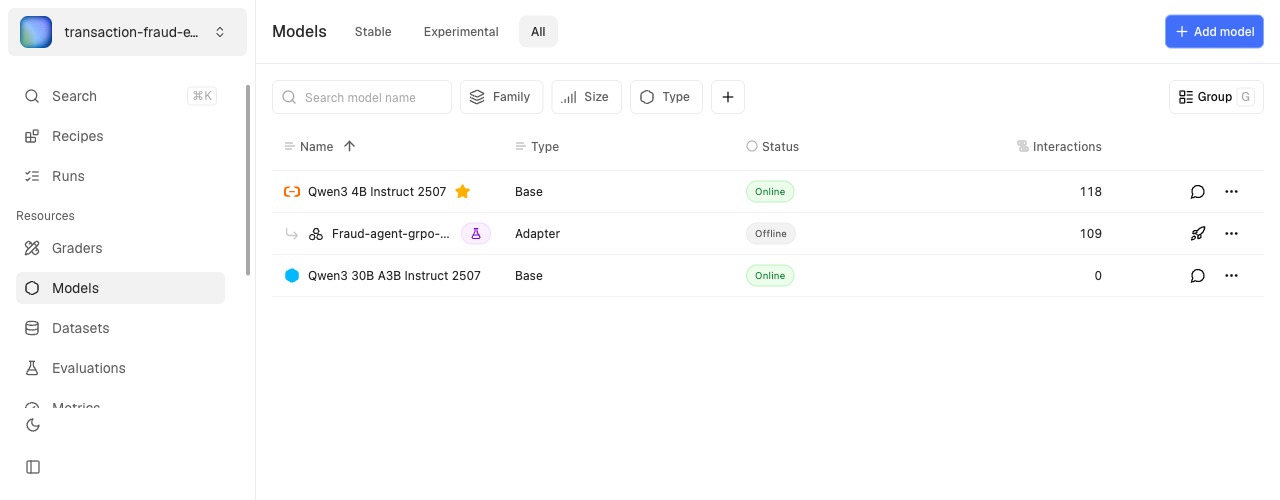

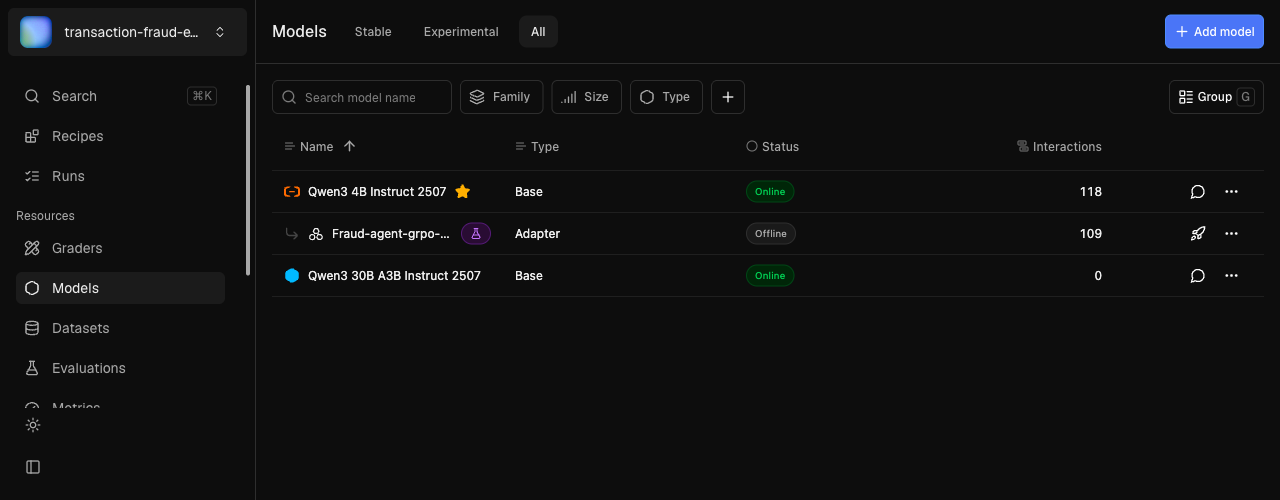

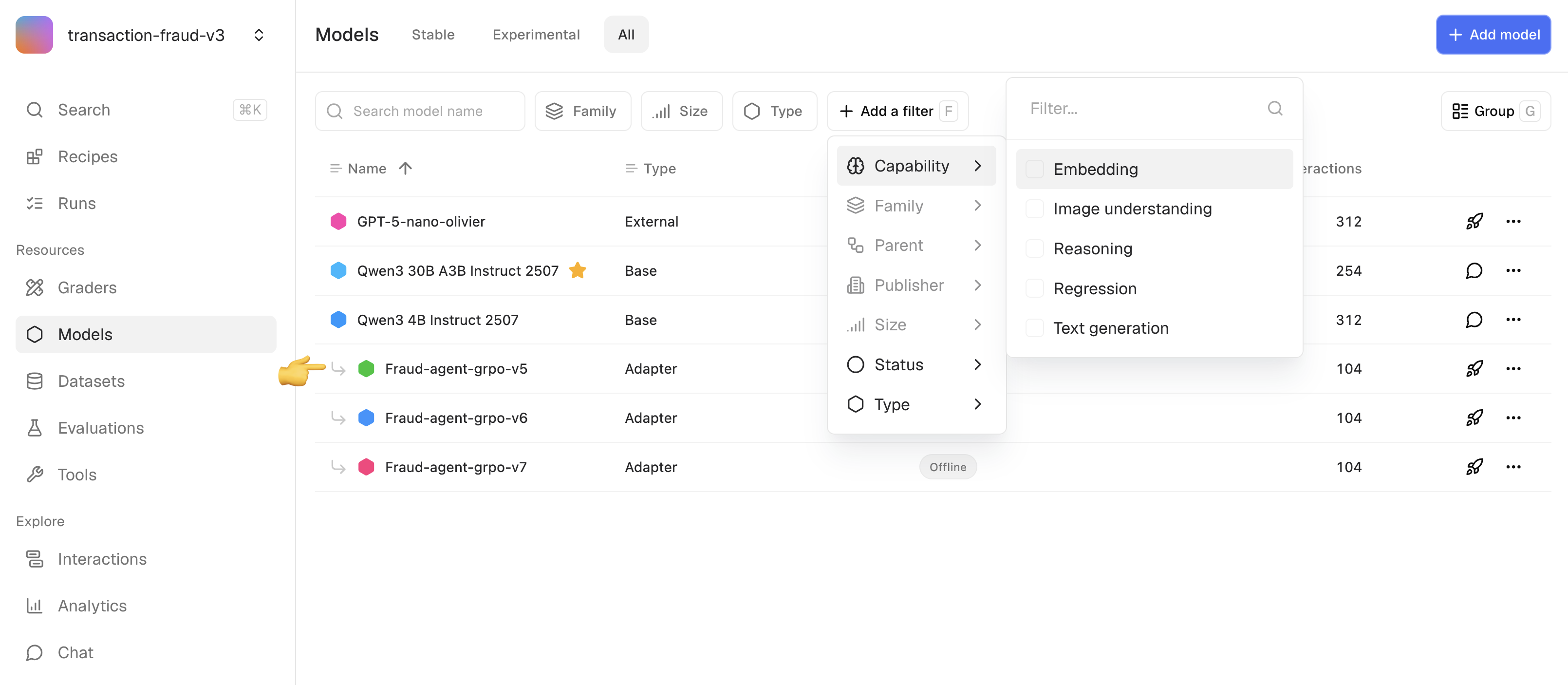

Deploy a model

Add a model to your project, then deploy it:

| Parameter | Type | Required | Description |
|---|---|---|---|
| model | str | Yes | Model key from the registry |
| wait | bool | No | Block until model is online (default: False) |
| make_default | bool | No | Set as default model for the project |
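As a rough illustration of how the three parameters fit together, the sketch below models them as a small Python dataclass. It is not the SDK's API: `DeployRequest`, `to_kwargs`, and the key `example-model-key` are hypothetical names introduced here, and the `False` default for `make_default` is an assumption, since only the default for `wait` is documented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeployRequest:
    """Hypothetical container for the deploy parameters (not part of the SDK)."""

    model: str                  # required: model key from the registry
    wait: bool = False          # optional: block until the model is online
    make_default: bool = False  # optional: this default is an assumption

    def __post_init__(self) -> None:
        # The only required parameter is the model key, so reject empty values.
        if not isinstance(self.model, str) or not self.model:
            raise ValueError("model must be a non-empty registry key")


def to_kwargs(request: DeployRequest) -> dict:
    """Turn the request into keyword arguments for a deploy call."""
    return {
        "model": request.model,
        "wait": request.wait,
        "make_default": request.make_default,
    }


# "example-model-key" is a placeholder, not a real registry entry.
request = DeployRequest(model="example-model-key", wait=True)
print(to_kwargs(request))
```

The resulting dictionary could be unpacked into whichever deploy method the SDK Reference documents; check the actual method name and signature there before relying on this shape.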

-multimodal) include a vision encoder that is automatically spawned alongside the decoder. The vision encoder is frozen during training. See Multimodal StringThread for supported models and image handling.

See SDK Reference for all model methods.