
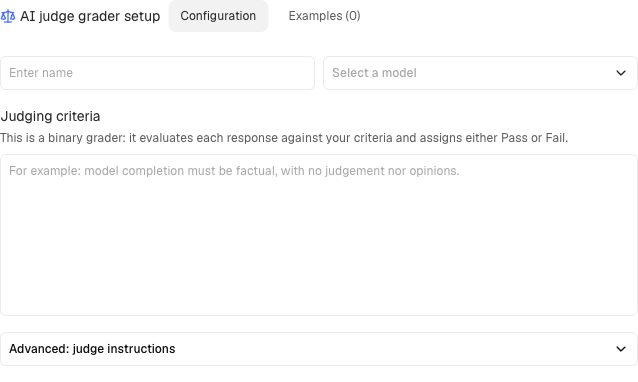

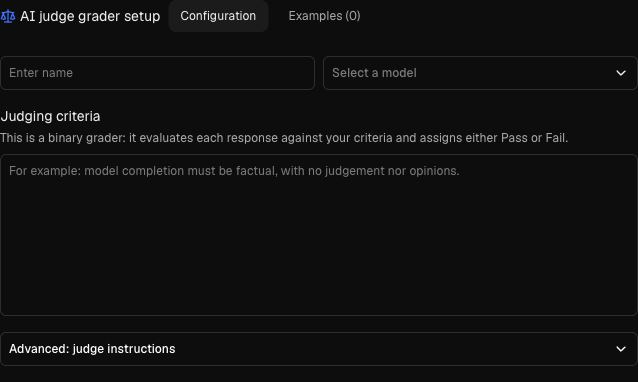

Create an AI judge

AI judges use an LLM to grade completions against criteria you define. They take the following parameters:

| Parameter | Type | Required | Description |
|---|---|---|---|
| `key` | `str` | Yes | Unique identifier |
| `criteria` | `str` | Yes | What constitutes a pass (natural language) |
| `judge_model` | `str` | Yes | Model to use as judge |
| `feedback_key` | `str` | Yes | Feedback key to write scores to |
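The following is a minimal sketch of what a binary AI judge does with these four parameters: it builds a prompt from the criteria and the completion, asks the judge model for a verdict, and writes a 0/1 score under the feedback key. This sketch does not use the Adaptive SDK. `BinaryJudge`, `build_prompt`, `grade`, the PASS/FAIL prompt wording, and the injected `call_llm` function are all hypothetical, and a stub replaces the real judge model.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical judge-model client: takes (model_name, prompt) and returns the model's text.
LLMCall = Callable[[str, str], str]


@dataclass(frozen=True)
class BinaryJudge:
    """Illustrative AI judge; the fields mirror the parameter table above."""

    key: str           # unique identifier
    criteria: str      # natural-language definition of a pass
    judge_model: str   # model that does the grading
    feedback_key: str  # feedback key the score is written to

    def build_prompt(self, completion: str) -> str:
        # Ask the judge model for a one-word verdict against the criteria.
        return (
            f"Criteria: {self.criteria}\n"
            f"Completion: {completion}\n"
            "Reply with PASS or FAIL."
        )

    def grade(self, completion: str, call_llm: LLMCall) -> dict[str, float]:
        verdict = call_llm(self.judge_model, self.build_prompt(completion))
        # Binary score: 1.0 if the verdict starts with PASS, otherwise 0.0.
        score = 1.0 if verdict.strip().upper().startswith("PASS") else 0.0
        return {self.feedback_key: score}


def fake_llm(model: str, prompt: str) -> str:
    # Stub standing in for a real judge model; always returns PASS.
    return "PASS"


judge = BinaryJudge(
    key="no-pii",
    criteria="The completion contains no phone numbers or email addresses.",
    judge_model="example-judge-model",  # hypothetical model name
    feedback_key="no_pii",
)
print(judge.grade("Here is the summary you asked for.", fake_llm))  # {'no_pii': 1.0}
```

Injecting the model call as a plain function keeps the grading logic testable without network access.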

Prompt templates for AI judges

AI judges use Handlebars templates for their prompts. Template variables give you access to the conversation context, the completion, and metadata. Basic syntax: reference a variable with double braces, for example `{{completion}}`.

All template variables

| Variable | Description |
|---|---|
| `completion` | The assistant’s completion being evaluated |
| `last_user_turn_content` | Content of the final user turn |
| `context_str` | Full conversation context as a formatted string |
| `context_str_without_last_user` | Context excluding the final user turn |
| `turns` | All turns as a list of `{role, content}` dicts |
| `context_turns` | All turns except the completion |
| `context_turns_without_last_user` | Context turns without the last user turn |
| `metadata` | Thread metadata dict |
| `output_schema` | Expected output JSON schema |
| `template_variables` | Custom variables passed at judge initialization |
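To show how these variables feed a Handlebars template, the sketch below derives a few of them from a list of turns and renders a small template. It is a hypothetical stand-in, not Adaptive's implementation. The `role: content` line format of `context_str` is assumed. The `render` function only approximates Handlebars: it supports plain `{{var}}` (HTML-escaped with Python's `html.escape`) and `{{{var}}}` (inserted raw), with no helpers, block expressions, or dotted paths.

```python
import html
import re

# Matches {{{name}}} (group 1) or {{name}} (group 2); the triple form is tried first.
_VAR = re.compile(r"\{\{\{\s*(\w+)\s*\}\}\}|\{\{\s*(\w+)\s*\}\}")


def render(template: str, variables: dict[str, object]) -> str:
    """Approximate Handlebars variable substitution (no helpers or blocks)."""

    def substitute(match: re.Match) -> str:
        if match.group(1) is not None:
            return str(variables[match.group(1)])  # {{{var}}}: inserted raw
        return html.escape(str(variables[match.group(2)]))  # {{var}}: HTML-escaped

    return _VAR.sub(substitute, template)


turns = [
    {"role": "user", "content": "Is 3 < 5?"},
    {"role": "assistant", "content": "Yes, 3 < 5."},
]
context_turns = turns[:-1]  # every turn except the completion
variables = {
    "completion": turns[-1]["content"],
    "last_user_turn_content": next(
        t["content"] for t in reversed(context_turns) if t["role"] == "user"
    ),
    # Assumed "role: content" line format; the real formatting may differ.
    "context_str": "\n".join(f"{t['role']}: {t['content']}" for t in context_turns),
}

template = "Context:\n{{{context_str}}}\n\nEscaped: {{completion}}\nRaw: {{{completion}}}"
prompt = render(template, variables)
print(prompt)
```

With `{{completion}}` the `<` is rendered as `&lt;`, while `{{{completion}}}` leaves it unchanged.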

Use triple braces (`{{{var}}}`) for variables that may contain HTML entities or special characters. Double braces HTML-escape the inserted value.

Grader types

| Type | Method | Use when |
|---|---|---|
| AI judge | `create.binary_judge()` | Criteria can be expressed in natural language |
| Pre-built | `create.prebuilt_judge()` | RAG evaluation (faithfulness, relevancy) |
| External endpoint | `create.external_endpoint()` | Scoring requires an external system |
| Custom | `create.custom()` | Python logic in recipes |
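A custom grader in the last row is ordinary Python logic. The function below is a hypothetical example of such logic: it checks that the completion is a JSON object containing required keys. Its name, its `str -> float` signature, and the `REQUIRED_KEYS` contract are assumptions, and it does not reflect the `create.custom()` interface.

```python
import json

# Hypothetical output contract for this example.
REQUIRED_KEYS = {"answer", "sources"}


def json_format_grader(completion: str) -> float:
    """Return 1.0 if the completion is a JSON object containing REQUIRED_KEYS, else 0.0."""
    try:
        parsed = json.loads(completion)
    except json.JSONDecodeError:
        return 0.0  # not valid JSON
    if not isinstance(parsed, dict):
        return 0.0  # valid JSON, but not an object
    return 1.0 if REQUIRED_KEYS.issubset(parsed) else 0.0


print(json_format_grader('{"answer": "42", "sources": []}'))  # 1.0
print(json_format_grader("The answer is 42."))                # 0.0
```

Deterministic checks like this suit custom graders because they need no judge model.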

Pre-built graders

For RAG applications, use the pre-built graders optimized by Adaptive:

- Faithfulness: Does the completion adhere to the provided context?

- Context Relevancy: Is the retrieved context relevant to the query?

- Answer Relevancy: Does the completion answer the question?

How pre-built graders work

Faithfulness breaks the completion into atomic claims and checks each one against the context. For example, with a context that supports the first claim below but not the date:

Completion: “Tim Berners-Lee published the first website in August 1990.”

Score: 0.5 (first claim supported, date claim unsupported)
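The worked example reduces to a ratio: supported claims divided by total claims. This minimal sketch assumes the claim extraction and per-claim verdicts come from a judge model, which is replaced here by hard-coded verdicts. It also assumes the score is the plain fraction of supported claims. `faithfulness_score` is a hypothetical helper, not an SDK function.

```python
from typing import Callable, Sequence


def faithfulness_score(claims: Sequence[str], is_supported: Callable[[str], bool]) -> float:
    """Fraction of atomic claims that the context supports."""
    if not claims:
        raise ValueError("no claims to score")
    supported = sum(1 for claim in claims if is_supported(claim))
    return supported / len(claims)


# Claims extracted from the example completion.
claims = [
    "Tim Berners-Lee published the first website.",
    "The first website was published in August 1990.",
]
# Hard-coded verdicts standing in for the judge model: the context supports
# the first claim but not the date.
verdicts = {claims[0]: True, claims[1]: False}
print(faithfulness_score(claims, verdicts.__getitem__))  # 0.5
```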

Context Relevancy checks whether the retrieved chunks are relevant to the query.
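This section does not say how per-chunk judgments are combined into a score. The sketch below assumes one simple aggregation: the fraction of chunks judged relevant. A toy keyword check stands in for the judge model, and Adaptive's actual scoring may differ. Both function names are hypothetical.

```python
from typing import Callable, Sequence


def context_relevancy_score(
    query: str,
    chunks: Sequence[str],
    is_relevant: Callable[[str, str], bool],
) -> float:
    """Assumed aggregation: fraction of retrieved chunks judged relevant to the query."""
    if not chunks:
        raise ValueError("no retrieved chunks to score")
    return sum(1 for chunk in chunks if is_relevant(query, chunk)) / len(chunks)


def keyword_judge(query: str, chunk: str) -> bool:
    # Toy stand-in for the judge model (ignores the query): relevant if the chunk mentions "website".
    return "website" in chunk.lower()


query = "Who published the first website?"
chunks = [
    "Tim Berners-Lee published the first website at CERN.",
    "CERN is located near Geneva.",
]
print(context_relevancy_score(query, chunks, keyword_judge))  # 0.5
```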

Answer Relevancy checks whether the completion addresses the question. Extra information the user did not request lowers the score.
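To make the "extra information lowers the score" behavior concrete, this sketch assumes the score is the fraction of completion statements that address the question. Under this assumption, padding a correct answer with an unrequested fact dilutes the score. The aggregation and the hard-wired `toy_judge` are hypothetical and may not match Adaptive's grader.

```python
from typing import Callable, Sequence


def answer_relevancy_score(
    question: str,
    statements: Sequence[str],
    addresses_question: Callable[[str, str], bool],
) -> float:
    """Assumed aggregation: fraction of completion statements that address the question."""
    if not statements:
        raise ValueError("no statements to score")
    hits = sum(1 for s in statements if addresses_question(question, s))
    return hits / len(statements)


def toy_judge(question: str, statement: str) -> bool:
    # Toy stand-in for the judge model, hard-wired to this one question.
    return "published the first website" in statement


question = "Who published the first website?"
focused = ["Tim Berners-Lee published the first website."]
padded = focused + ["He was born in London in 1955."]
print(answer_relevancy_score(question, focused, toy_judge))  # 1.0
print(answer_relevancy_score(question, padded, toy_judge))   # 0.5
```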

Pass context as document turns in the input messages, with each retrieved chunk as a separate turn.
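The following sketch shows input messages with one document turn per retrieved chunk. The `"document"` role string, the `{role, content}` dict shape, and the placement of chunks before the user question are assumptions. Check the SDK reference for the exact message format. `build_rag_messages` is a hypothetical helper.

```python
def build_rag_messages(question: str, chunks: list[str]) -> list[dict[str, str]]:
    """Input messages with one document turn per retrieved chunk, then the user question."""
    # The "document" role string and the chunks-before-question order are assumptions.
    messages = [{"role": "document", "content": chunk} for chunk in chunks]
    messages.append({"role": "user", "content": question})
    return messages


messages = build_rag_messages(
    "Who published the first website?",
    [
        "Tim Berners-Lee published the first website at CERN.",
        "CERN is located near Geneva.",
    ],
)
for m in messages:
    print(m["role"], "|", m["content"])
```

Keeping each chunk in its own turn lets per-chunk graders such as Context Relevancy judge chunks individually.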