When you start a run for a recipe on the Adaptive platform, that process is executed on the same infrastructure that hosts Adaptive Engine. This is the appropriate way to execute long-running processes like training or evaluation recipes. However, when you first start writing a custom recipe or grader, you might want to experiment and debug your logic locally before you neatly wrap the recipe in the appropriate launch syntax and parametrize its inputs.

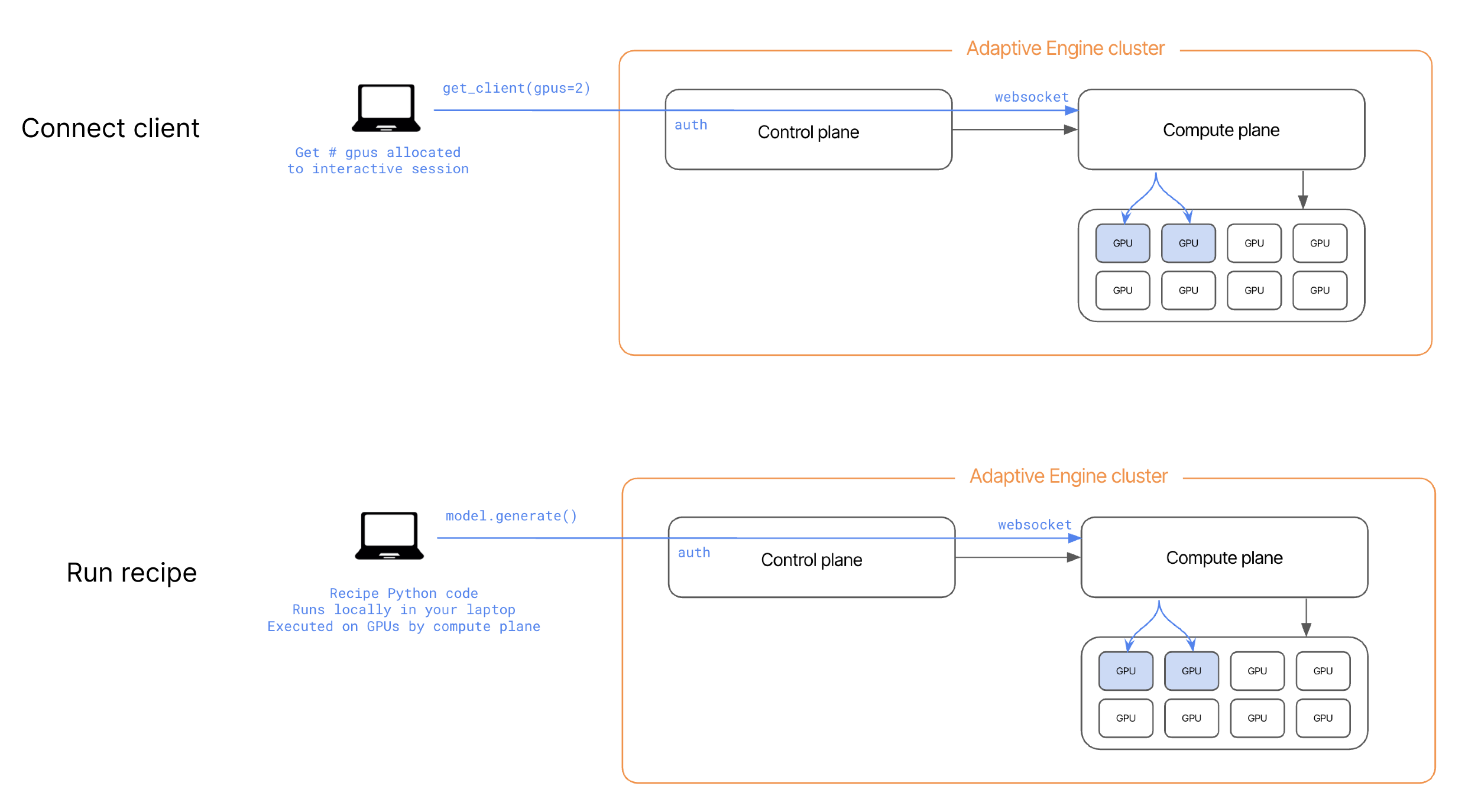

`adaptive_harmony` allows you to establish a direct connection via secure WebSockets between your local environment and the compute plane of your Adaptive Engine deployment. When you instantiate a `HarmonyClient`, the desired number of GPUs is allocated directly to you as an interactive session. These GPUs are freed to run other workloads or interactive sessions as soon as the local Python process holding that client in memory is killed.

If you use `adaptive_harmony` in a Jupyter Notebook, you can directly await async methods like `get_client` in a Jupyter cell (i.e. `await get_client()`); there is no need to use `asyncio`.

When you spawn a model on GPU, you get back a new handle in Python to a remote model, such as `TrainingModel` or `InferenceModel`, which you can also call methods on (such as `.generate()`, `.train_grpo()`, `.optim_step()`, etc.). This creates a hybrid development environment, where you can step through Python recipe code locally in your IDE, but have powerful compute resources execute the methods that require them.
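The difference between awaiting in a notebook cell and in a plain script can be sketched with standard-library `asyncio` alone. The snippet below does not use `adaptive_harmony`: `fake_get_client`, `FakeClient`, `FakeModelHandle`, and `spawn_model` are hypothetical stand-ins invented for illustration, and the real client, spawn call, and handle signatures may differ. It only shows the general pattern of awaiting a client, awaiting a handle, and then awaiting a method on that handle.

```python
import asyncio


class FakeModelHandle:
    """Hypothetical stand-in for a remote model handle (not the adaptive_harmony API)."""

    async def generate(self, prompt: str) -> str:
        # A real handle would forward this call to remote GPUs.
        # Here we only yield to the event loop and echo the prompt back.
        await asyncio.sleep(0)
        return f"echo: {prompt}"


class FakeClient:
    """Hypothetical stand-in for a client that owns an interactive session."""

    async def spawn_model(self) -> FakeModelHandle:
        # Assumed name; stands in for whatever call spawns a model on GPU.
        await asyncio.sleep(0)
        return FakeModelHandle()


async def fake_get_client() -> FakeClient:
    # Stand-in coroutine; the real get_client arguments are not shown here.
    await asyncio.sleep(0)
    return FakeClient()


async def main() -> str:
    client = await fake_get_client()
    model = await client.spawn_model()
    return await model.generate("hello")


if __name__ == "__main__":
    # A plain script has no running event loop, so one is started explicitly.
    # In a Jupyter cell you would instead write: result = await main()
    result = asyncio.run(main())
    print(result)
```

Because every remote call in this pattern is a coroutine, the same `main()` body can be pasted into notebook cells line by line with bare `await`, or kept in a script behind `asyncio.run`.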

RecipeContext (ctx).